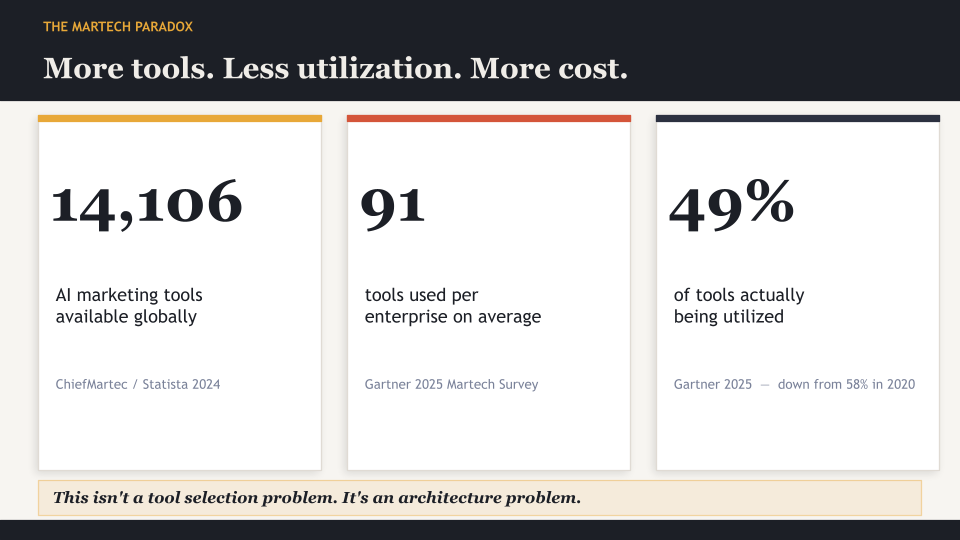

There are 14,106 marketing technology solutions available today. The average enterprise marketing organization uses 91 of them. And according to Gartner, organizations use less than half of the capability they are paying for.

That is not a discovery problem. It is not a budget problem. It is an architecture problem.

Most growth-stage marketing teams are not under-tooled. They are over-tooled and under-connected. They have AI writing assistants, AI ad platforms, AI analytics dashboards, and AI email tools, all purchased to solve real problems, and none of them talk to each other in any meaningful way. The team spends more time moving context between platforms than acting on what those platforms produce.

That is a tool stack. And a tool stack, no matter how many AI features it contains, is not a marketing system.

If your team is in this position, the session More Output, Same Team shows exactly what the operational alternative looks like, built live from a blank screen.

But first, let’s establish the distinction that the industry has largely failed to make clearly.

What Is AI Marketing, Really?

Before getting into systems and stacks, it helps to ground the definition. So what is AI marketing at the functional level?

AI marketing is the application of machine learning, predictive modeling, and automation to the decisions and tasks that make up a marketing operation. In practice, this includes things like automated bid management in paid media, predictive lead scoring, personalized content delivery, and AI-generated campaign assets. These are not theoretical capabilities. They are available today across most of the major marketing AI tools in the market.

The problem is that most organizations treat these capabilities as independent solutions to independent problems. They buy an AI tool for paid media, another for content, another for analytics, and another for outreach. Each solves something. None of them compounds.

That is the difference between AI marketing tools and an AI marketing system. Tools solve tasks. Systems solve problems by connecting decisions.

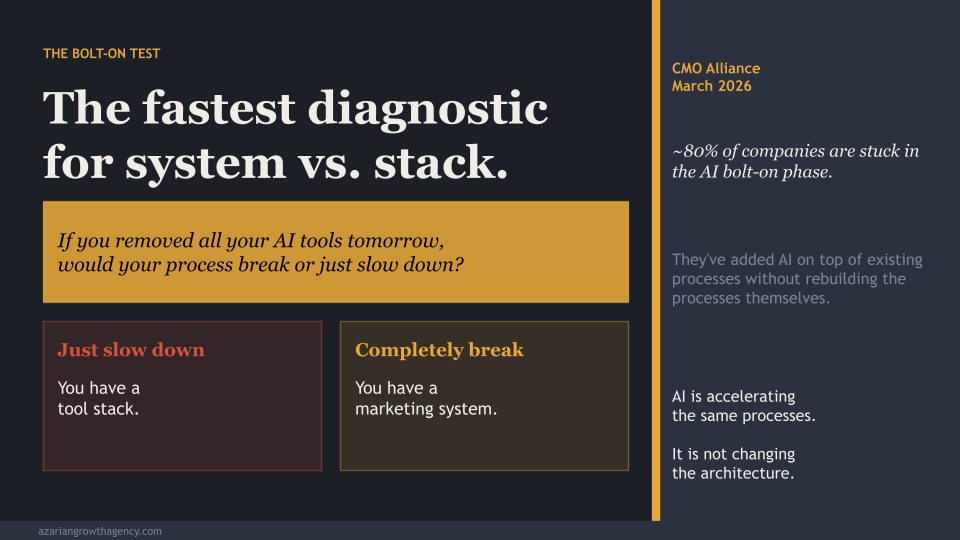

The Bolt-On Test

Of the sources reviewed, the CMO Alliance offers the clearest diagnostic for this distinction.

Ask yourself: if you removed all of your AI tools tomorrow, what would happen? Would your marketing process break? Or would it just slow down?

If it would just slow down, you have a tool stack. The bolt-on test exposes something important: most teams have added AI on top of existing processes without rebuilding the processes themselves. The AI writes faster. The AI optimizes bids. But the underlying workflow, the way information flows, the way decisions get made, and the way outcomes feed back into future decisions, is unchanged.

That is what the CMO Alliance means when they describe roughly 80% of companies as stuck in “the AI bolt-on phase.” AI is accelerating the same processes. It is not changing the architecture.

An AI marketing system, by contrast, is embedded in the architecture. If you removed it, the operation would not just slow down. It would stop functioning in its current form because the system is not layered on top of the process. It IS the process.

Why the Martech Stack Keeps Failing

The failure rate of AI marketing initiatives is not abstract. BCG’s research, published in early 2026, found something counterintuitive: workers using three or fewer AI tools reported improved efficiency. Productivity dropped sharply for workers using four or more. The research named the phenomenon “AI Brain Fry.” More tools, more cognitive load, more decisions about which output to trust, more time spent managing the stack rather than executing the strategy.

This lands differently when you see the martech utilization numbers. Gartner tracks this annually. In 2025, martech utilization sat at 49%. Organizations are using less than half of what they’re paying for. A mid-market company spending $850,000 in software licenses is carrying a total annual stack cost closer to $2.1 million once integration work, training, and support are loaded in. That gap between license cost and total cost is one of the most consistently underestimated line items in marketing operations.
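To make the gap concrete, here is the arithmetic as a short sketch. The dollar figures and the 49% utilization rate come from the paragraph above. Applying utilization as a flat multiplier on total cost is a simplifying assumption for illustration, not a Gartner method.

```python
# Figures quoted in the text above.
license_cost = 850_000        # annual software licenses (USD)
total_stack_cost = 2_100_000  # licenses plus integration, training, support (USD)
utilization = 0.49            # 2025 martech utilization figure

# Costs that never appear on the license invoice.
hidden_cost = total_stack_cost - license_cost

# Total dollars carried for every dollar of license spend.
cost_multiplier = total_stack_cost / license_cost

# Assumption: utilization applies evenly across the whole stack cost.
unused_spend = total_stack_cost * (1 - utilization)

print(f"Hidden cost: ${hidden_cost:,.0f}")
print(f"Total cost per license dollar: {cost_multiplier:.2f}x")
print(f"Spend behind unused capability: ${unused_spend:,.0f}")
```

Under these assumptions, roughly $1.25 million of the annual cost sits outside the license line, and a little over $1 million of total spend supports capability nobody is using.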

The reason utilization stays low is not that marketers are lazy or resistant to technology. WalkMe’s 2025 research found that 71% of office workers believe new AI tools are emerging faster than they can learn to use them, and nearly 60% admit it often takes longer to figure out a new AI tool than to just complete the task manually. Forty-five percent have pretended to know how to use an AI tool in a meeting.

These are not technology skeptics. These are capable professionals overwhelmed by fragmented systems.

And the fragmentation is the core issue. Sixty-five percent of marketers cite integration as their top challenge. Forrester Research found that 68% of mid-market martech stacks have three or more tools performing the same function with minimal differentiation. The cost of tool sprawl is not just in the tools you’re not using. It’s in the coordination work your team is doing manually to compensate for the gaps between them.

What an AI Marketing System Actually Is

The definition that holds up best across the practitioner literature comes from Donella Meadows’ foundational work on systems thinking. A system is more than a collection of things. It is an interconnected set of elements, coherently organized to achieve something. The behaviors that emerge from a system come from the interactions between its elements, not from any individual element in isolation.

Apply that to marketing. A collection of AI tools that each solve individual problems and report individual metrics is not a system. An AI marketing system is one where data, decisions, execution, and feedback are interconnected, and where the output of each component becomes an input to the others.

Transparent Partners articulates this as five structural requirements. An AI-ready marketing architecture needs: a centralized data foundation that treats customer data as a shared enterprise asset rather than tool-specific property; intelligence separated from execution, meaning decision logic lives in shared services rather than inside individual channel platforms; explicit orchestration that defines signals, decision points, and actions across the system; human-AI collaboration by design rather than by accident; and governance that accelerates rather than restricts progress.

Their key observation: “AI doesn’t demand more marketing technology. It demands better marketing architecture.”

This is exactly right, and it changes how you evaluate your current setup. The question is not “do we have the right tools?” The question is “do our tools share data, inform each other’s decisions, and produce compound learning over time?”

If the answer is no, you have a stack. The automated marketing platform question is really an architecture question.

The Four Layers of an AI Marketing System

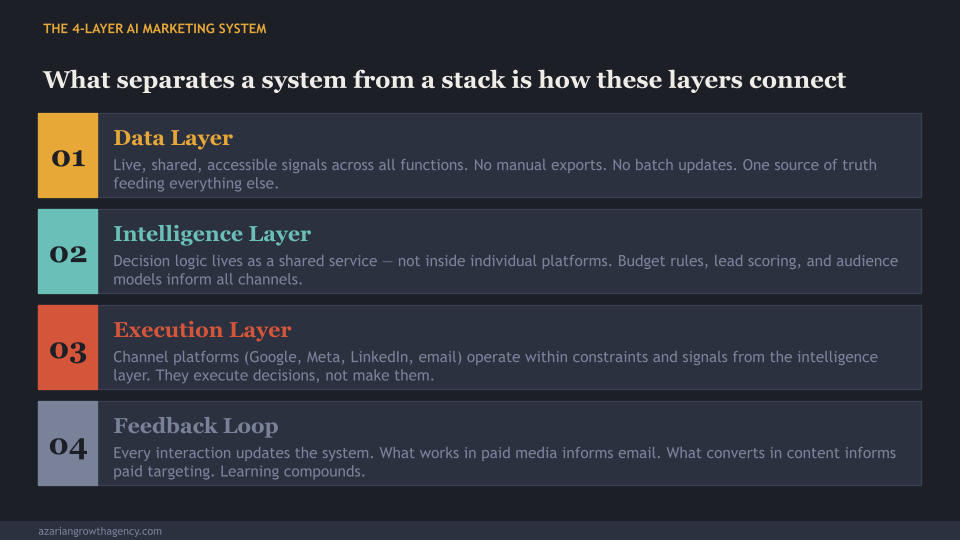

The CMO Alliance’s framework for an AI Growth Operating System maps closely to what the practitioner research consistently describes. It breaks into four connected layers.

The Data Layer is the foundation. This is not about having data. Every team has data. It is about having data that is live, shared, and accessible across all functions without manual export and import cycles. The difference between a team that acts on last week’s data and a team that acts on last hour’s signals is the difference between a database and a data layer. Ramp, which scaled from zero to $700 million in annual recurring revenue in roughly three years, built on Snowflake, with dbt models feeding Hightouch to push signals directly into Salesforce and HubSpot. Their stated service level agreement: product-usage signal to sales rep task in under one hour.
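One way to tell whether a data layer is actually live is to measure signal-to-task latency against a target like the one-hour figure above. The sketch below is a minimal illustration. The `SignalDelivery` record, its field names, and the sample accounts are hypothetical and do not describe Ramp's implementation.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

SLA = timedelta(hours=1)  # the under-one-hour target quoted above


@dataclass
class SignalDelivery:
    """Hypothetical record: when a usage signal fired and when the rep task appeared."""
    account: str
    signal_at: datetime
    task_created_at: datetime

    @property
    def latency(self) -> timedelta:
        return self.task_created_at - self.signal_at


def sla_breaches(deliveries: list[SignalDelivery]) -> list[SignalDelivery]:
    """Return deliveries that took an hour or longer to reach a rep."""
    return [d for d in deliveries if d.latency >= SLA]


# Made-up sample data for illustration.
deliveries = [
    SignalDelivery("acme", datetime(2025, 1, 6, 9, 0), datetime(2025, 1, 6, 9, 20)),
    SignalDelivery("globex", datetime(2025, 1, 6, 9, 0), datetime(2025, 1, 6, 11, 5)),
]
breaches = sla_breaches(deliveries)
print([d.account for d in breaches])
```

A team running weekly exports would fail this check on almost every record, which is the practical difference between a database and a data layer.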

The Intelligence Layer is where decisions get made. In a fragmented stack, intelligence lives inside individual platforms. Google’s Smart Bidding makes decisions about Google ads. Meta’s Advantage+ makes decisions about Meta ads. These decisions don’t talk to each other, don’t share context about the customer journey, and don’t align on shared business objectives. In a system, intelligence is a shared service. Budget allocation logic, audience expansion rules, and lead scoring models sit above the channel level and inform execution across the entire operation.
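As a rough sketch of intelligence living above the channel level, the function below splits a single budget across channels using one shared metric instead of letting each platform optimize alone. The proportional rule, the channel names, and the efficiency numbers are all hypothetical, chosen only to show the shape of the idea. The sketch assumes efficiency values are non-negative.

```python
def allocate_budget(
    total_budget: float, pipeline_per_dollar: dict[str, float]
) -> dict[str, float]:
    """Split one budget across channels in proportion to a shared efficiency metric."""
    total_efficiency = sum(pipeline_per_dollar.values())
    if total_efficiency <= 0:
        raise ValueError("need at least one channel with positive efficiency")
    return {
        channel: total_budget * efficiency / total_efficiency
        for channel, efficiency in pipeline_per_dollar.items()
    }


# Hypothetical inputs: dollars of pipeline generated per dollar spent.
efficiency = {"google_ads": 3.0, "meta_ads": 1.0, "linkedin_ads": 4.0}
plan = allocate_budget(100_000, efficiency)
print(plan)
```

The point is not the allocation rule itself, which a real team would replace with something more careful. It is that the decision is made once, with context from every channel, and then handed to the platforms to execute.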

The Execution Layer is where channel-specific platforms operate, but within the constraints and signals passed down from the intelligence layer. Campaign managers, ad platforms, email systems, and content tools execute decisions rather than make them. This is the layer where most teams live exclusively, which is why adding more tools to the execution layer never produces system-level results.

The Feedback Loop is what makes the whole thing compound. Duct Tape Marketing frames this as the shift from “campaigns you launch” to “systems you operate.” In a system, every interaction feeds back into the model. An ad that converts well on LinkedIn updates the audience model that informs the email segmentation. A content piece that drives qualified traffic updates the topic authority model that informs future production. Without the feedback loop, learning stays siloed inside individual tools and never improves the system’s overall performance.
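The LinkedIn-to-email example can be sketched as a shared model that any channel writes to and any channel reads from. Everything here is an illustrative assumption: the `SharedAudienceModel` class, the segment names, and the use of a simple exponential moving average to blend new results.

```python
class SharedAudienceModel:
    """Hypothetical shared store of per-segment conversion estimates."""

    def __init__(self, learning_rate: float = 0.5):
        self.learning_rate = learning_rate
        self.scores: dict[str, float] = {}

    def record_outcome(self, segment: str, conversion_rate: float) -> None:
        """Blend a new observation from any channel into the shared estimate."""
        previous = self.scores.get(segment, conversion_rate)
        self.scores[segment] = previous + self.learning_rate * (conversion_rate - previous)

    def top_segments(self, n: int) -> list[str]:
        """Segments ranked by current estimate, for any channel to consume."""
        return sorted(self.scores, key=self.scores.get, reverse=True)[:n]


model = SharedAudienceModel()

# Paid social reports its results into the shared model...
model.record_outcome("finance_leaders", 0.08)
model.record_outcome("it_managers", 0.02)
model.record_outcome("finance_leaders", 0.04)  # a later, weaker week

# ...and email segmentation reads from the same model.
email_priority = model.top_segments(1)
print(email_priority)
```

In a stack, the equivalent of `record_outcome` happens inside one ad platform and nothing else ever reads it.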

What Ramp Actually Built

Ramp is the most instructive growth-stage example in the current market because it is the same company profile as most Azarian Growth Agency clients, just slightly further along in the journey.

Their growth architecture starts with a single North Star metric: dollars of SQL pipeline. Every growth activity across marketing, product, sales, and data teams is measured in terms of that metric. This is not a dashboard convention. It is a structural decision that forces every tool and every team to speak the same language.

From that foundation, they built a machine learning model that now predicts 75% of future SQLs before a sales rep clicks send. The data pipeline runs continuously. The intelligence layer updates the execution layer automatically. The feedback loop closes in under an hour.

That is an AI marketing strategy built as infrastructure, not as a tool purchase. The result: they ran over 100 experiments per quarter, with losing tests automatically sunset on Fridays. The team wasn’t bigger than comparable companies. The system was faster and more connected.

Airbnb built something structurally similar for personalization. Their User Signals Platform processes one million events per second with sub-second end-to-end latency. Engineers define new signals via configuration files without needing to rebuild the underlying infrastructure. The platform is not a collection of analytics tools. It is a shared data and decision layer that all marketing and product functions draw from.

Do You Have a System or a Stack? Seven Questions

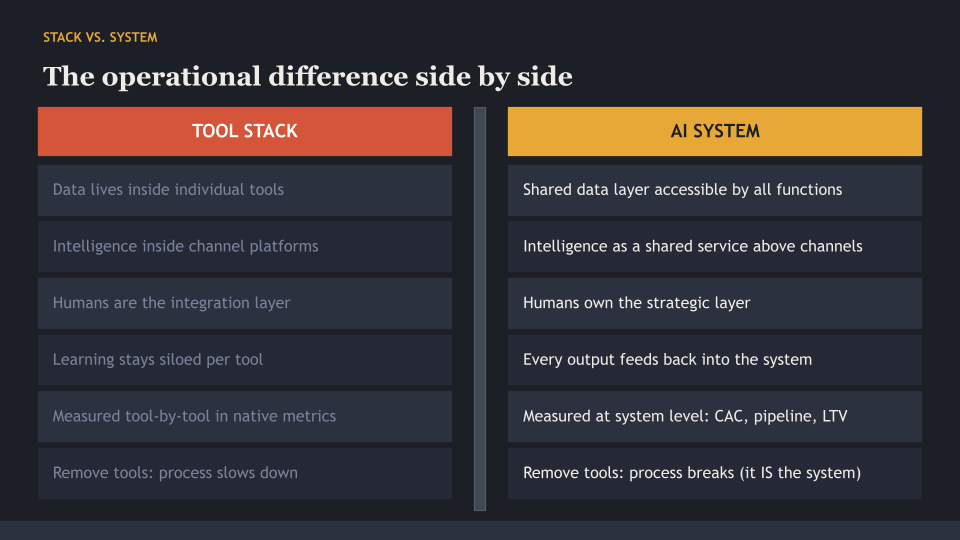

This is the diagnostic framework that the research consistently points toward. Answer these honestly.

Question 1: Can you trace a complete customer journey without stitching together reports from different platforms? If you need to manually combine exports from three tools to understand what happened, you do not have a unified data layer.

Question 2: Does intelligence live inside channel platforms or in shared services? If Google optimizes Google and Meta optimizes Meta with no shared context, you have execution without coordination.

Question 3: Is your data updated continuously or in weekly batch cycles? Real-time signal processing is a system requirement. Weekly batch updates are a stack characteristic.

Question 4: Do you have explicit orchestration rules, or is workflow documentation informal and living in someone’s head? Systems are documented and repeatable. Stacks run on institutional knowledge.

Question 5: When one channel learns something useful, does that learning automatically reach other channels? If your paid media team’s findings about what headline converts don’t automatically inform your email team’s subject line testing, you have disconnected loops.

Question 6: Can a new team member understand how marketing decisions flow without interviewing five people? If the answer is no, the system exists in your people, not in your architecture.

Question 7: Are you measuring individual tool performance or system-level outcomes? Reporting in terms of CAC, pipeline contribution, and LTV:CAC ratio across the whole system is a system-level measurement. Reporting tool-by-tool in their native metrics is a stack-level measurement.

If four or more of your answers landed on the stack side, you have a stack. That is not a failure. It is a starting point. But it explains why adding more AI-powered marketing tools has not produced proportional results.
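For teams that want to run the diagnostic across several people and compare results, the scoring can be sketched in a few lines. The short question labels are paraphrases, and recording each answer as `True` when it lands on the system side is an assumption made so that all seven questions score the same way.

```python
QUESTIONS = [
    "Customer journey traceable without stitching reports",
    "Intelligence lives in shared services",
    "Data updated continuously",
    "Explicit, documented orchestration rules",
    "Learning reaches other channels automatically",
    "New hires can follow decision flow without interviews",
    "Outcomes measured at system level",
]
STACK_THRESHOLD = 4  # four or more stack-side answers, per the text above


def is_stack(system_side: list[bool]) -> bool:
    """True when enough answers fall on the stack side to call it a stack."""
    if len(system_side) != len(QUESTIONS):
        raise ValueError(f"expected {len(QUESTIONS)} answers, got {len(system_side)}")
    stack_side_count = system_side.count(False)
    return stack_side_count >= STACK_THRESHOLD


# Hypothetical team: only questions 1 and 7 land on the system side.
answers = [True, False, False, False, False, False, True]
print(is_stack(answers))
```

Scoring the same seven questions again after each architectural change gives a simple way to track whether the operation is moving toward a system.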

What Changes When You Build a System

Duct Tape Marketing describes the shift as moving execution from human throughput to machine throughput, while humans move up the stack to judgment, strategy, and narrative. This is not a reduction in the human role. It is a reallocation.

In a stack, humans ARE the integration layer. They manually move context between tools, reconcile conflicting dashboards, and make judgment calls about which AI output to trust. This is expensive, slow, and error-prone. It is also the reason lean teams hit capacity ceilings that more headcount alone cannot solve.

In a system, humans own the strategic layer. They set constraints, define brand guardrails, approve positioning, evaluate creative direction, and review anomalies when the system flags them. The connective tissue handles data flow and orchestration. The team’s cognitive load drops because the system handles the coordination that humans were doing manually.

The HubSpot evolution illustrates this at the platform level. At their 2025 INBOUND conference, CEO Yamini Rangan described the shift to “The Loop” as the operating principle for the agentic AI era. HubSpot’s 2025 product releases included over 200 new products oriented around shared data infrastructure (Data Hub, Smart CRM), AI agents working within the shared system (Breeze agents), and measurement that reports across the system rather than tool by tool.

The platform is moving toward system architecture because that is where AI marketing ROI actually materializes. Not in the individual features. In the compounding of connected decisions.

Why Agentic AI Makes This Distinction Mandatory

The timing matters. Gartner predicts that by 2028, 60% of brands will use agentic AI for streamlined one-to-one interactions. It also projects that by 2027, a lack of AI literacy will be a top-three reason enterprise CMOs are replaced.

Agentic AI, the use of AI systems that can take multi-step actions autonomously, requires a system architecture to work. An AI agent writing campaign copy needs access to brand guidelines, performance data, audience intelligence, and competitive context simultaneously. It cannot function inside a fragmented stack where that information lives in four different tools that don’t communicate. An automated marketing platform is not enough. The architecture underneath it has to be coherent.

The teams that will absorb agentic AI as it matures are the teams that have already built the data foundation, the shared intelligence layer, and the orchestration framework. The teams still managing tool stacks will face agentic AI the same way they faced every previous wave of AI tools: impressive demos, real adoption friction, and results that don’t compound.

This is not a 2028 problem. The architectural decisions that determine which side of that line you’re on are being made right now.

About Azarian Growth Agency

Azarian Growth Agency is a full-funnel, AI-native growth marketing agency founded by Hamlet Azarian. We work with growth-stage companies across SaaS, fintech, B2B tech, and e-commerce to build the marketing infrastructure that connects paid media, analytics, and pipeline to measurable revenue outcomes.

The distinction this post draws between systems and stacks is not theoretical for us. It is the operational premise of every engagement we run. We do not add AI tools on top of existing workflows and call it a transformation. We build the data layer, the orchestration logic, the measurement framework, and the feedback loops that make AI-powered marketing actually compound.

Our team has helped companies raise over $269 million by building marketing engines that scale output without scaling headcount. The work starts with architecture, not with tool selection.

If you want to see what this looks like in practice, the More Output, Same Team session is a live demonstration of exactly that. Not a slide deck. Not a vendor overview. A working AI marketing system built from scratch so you can evaluate the approach before committing to anything.