In November 2025, a developer named Peter Steinberger released an open-source project that connected an AI assistant to WhatsApp, Slack, and a dozen other messaging platforms. Within three months, OpenClaw had accumulated 196,000 GitHub stars, making it the fastest-growing AI project in history. By February 2026, Sam Altman had brought Steinberger to OpenAI.

Meanwhile, a Czech developer named Jan Tomášek was quietly building something arguably more radical: Agent Zero, an AI framework where the agent doesn’t use pre-built tools; it writes its own. Need to analyze competitor data? It writes the Python script. Need a report? It creates the template. Need an API integration that doesn’t exist? It reads the docs and builds one.

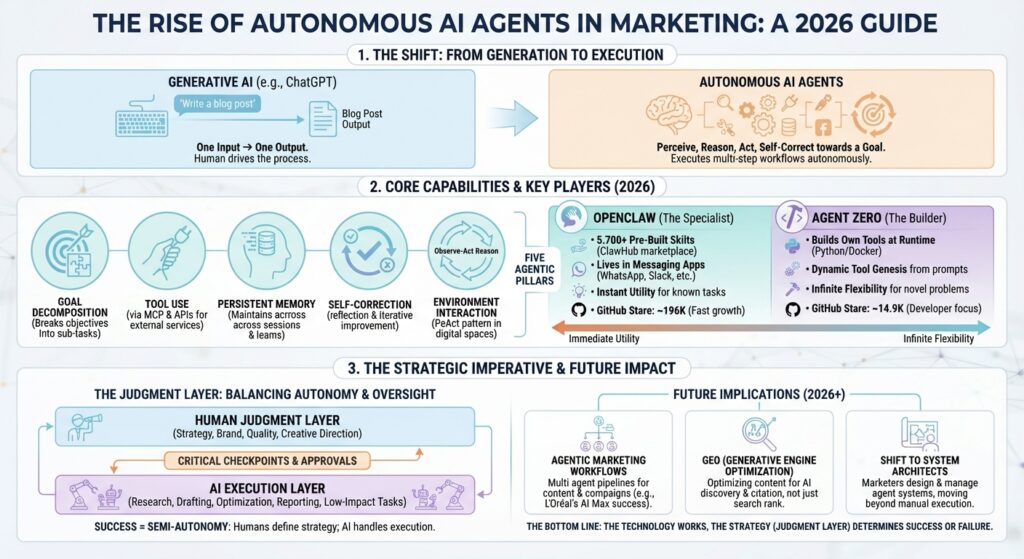

These two projects represent a shift that matters for every growth marketer, startup founder, and marketing executive reading this: we’re moving from AI that generates content to AI that executes entire workflows autonomously. The agentic AI market is roughly $7–8 billion in 2025 and growing at 45% annually. 57% of companies already have agents in production.

But here’s the number that should get your attention: only 2% have deployed them at scale. The gap between experimenting with agents and making them work in real marketing operations is where the opportunity lives, and it’s exactly where this guide will take you.

By the end of this piece, you’ll understand what autonomous and semi-autonomous agents actually are (without the jargon), how OpenClaw and Agent Zero work, where they diverge, and what all of this means for your content production, campaign management, and growth strategy.

What Are Autonomous AI Agents? (And Why They’re Different from ChatGPT)

Let’s clear up the confusion first. The terms “autonomous agent,” “semi-autonomous agent,” and “agentic AI” get used interchangeably. They shouldn’t be.

Generative AI (what most marketers know) creates content from prompts. You ask ChatGPT to write a blog post, and it writes a blog post. One input, one output. You’re driving the entire time.

An autonomous AI agent is software that perceives its environment, reasons about goals, executes multi-step plans using external tools, and self-corrects, all with minimal human oversight. MIT Sloan defines these as “autonomous software systems that perceive, reason, and act in digital environments to achieve goals on behalf of human principals.”

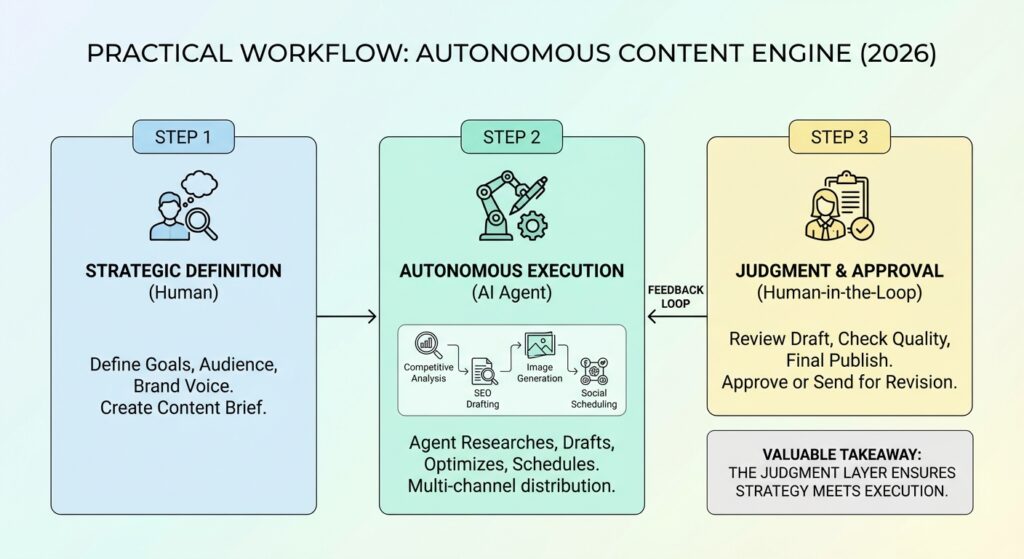

A semi-autonomous agent does the same thing, but with human checkpoints at critical decisions. Think of it as cruise control with a driver who takes the wheel for highway exits. This is what we call the Judgment Layer: AI handles execution, humans handle strategic oversight.

The practical distinction? Generative AI writes your blog post. An agentic AI system researches your competitors, identifies content gaps, writes the post, optimizes it for SEO, publishes it to WordPress, distributes it across social channels, and monitors its performance, then adjusts strategy based on what it learns.

The Five Capabilities That Make a System “Agentic”

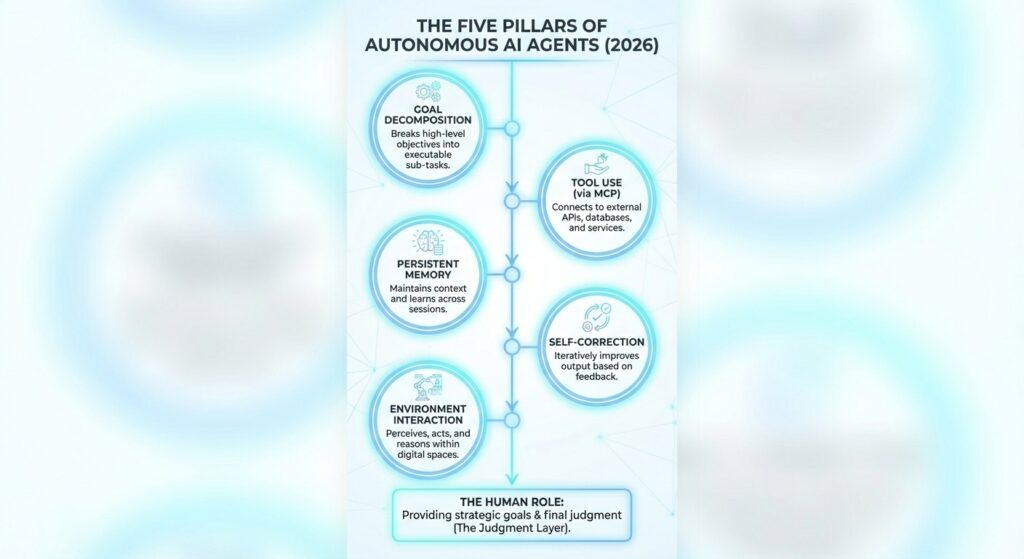

For a system to qualify as truly agentic, it needs five architectural capabilities:

- Goal decomposition. The agent takes a high-level objective (“increase organic traffic by 30%”) and breaks it into executable sub-tasks without you mapping every step.

- Tool use via function calling. The agent invokes external services (web searches, API calls, database queries) using the Model Context Protocol (MCP), which has become the industry standard with 97 million+ monthly SDK downloads. It’s frequently called the “USB-C for AI.”

- Memory across sessions. Unlike ChatGPT conversations that reset, agents maintain persistent memory; they remember what worked, what didn’t, and what they’ve learned about your brand, competitors, and audience.

- Self-correction. If a search returns irrelevant results, the agent reformulates its query. If the content doesn’t meet quality thresholds, the agent revises it. This Reflexion pattern is what separates agents from simple automation.

- Environment interaction (the ReAct pattern). The agent reasons about what to do, acts by calling a tool, observes the result, and loops until the task is complete. IBM describes this as merging decision-making with task execution in a single, auditable cycle.

Why This Matters Now: The Market Context

Three things converged in 2025 to make autonomous agents practical, not just impressive demos:

- The standards layer arrived. MCP (97M+ monthly downloads) gives agents a universal way to connect to tools. Google’s A2A protocol lets agents talk to each other. AGENTS.md (60K+ projects) standardizes how agents discover project context.

- Marketing platforms went agentic. Google launched its Ads Advisor in December 2025, a Gemini-powered agent that manages campaigns, suggests keywords, and implements changes. Amazon followed with its own Ads Agent. Salesforce Agentforce now has 12,000+ customers processing tasks at $2/conversation.

- The ROI data became undeniable. L’Oréal activated Google AI Max and saw conversion rates double while cost per conversion dropped 31%. A Belgian SaaS startup increased conversion rates from 1.9% to 5.5% within six weeks using AI-driven dynamic content. Average ROI across enterprise agent deployments: 171%.

But here’s the honest picture: Gartner predicts that 40%+ of agentic AI projects will fail by 2027. The #1 challenge, cited by 32% of respondents in LangChain’s State of Agent Engineering survey, is quality: hallucinations and output inconsistency. The technology works. The strategy layer is what separates success from expensive failure.

Deep Dive: OpenClaw, The Agent That Lives in Your Messages

The Origin Story

Peter Steinberger is not a newcomer to tech. He founded PSPDFKit (a PDF SDK used by companies like Dropbox, Autodesk, and IBM) and sold it. In November 2025, he released an open-source project originally called “Clawdbot”, a personal AI assistant that connected to his messaging platforms and handled tasks across all of them.

The project went viral. Renamed “OpenClaw” in late January 2026, it hit 196,000 GitHub stars in under three months (for reference, React has ~230K after 11 years). It was attracting 2 million website visitors per week. On February 14, 2026, Sam Altman brought Steinberger to OpenAI, and the project began transitioning to an independent open-source foundation.

What It Does

OpenClaw is an always-on AI assistant that connects to 12+ messaging and communication platforms: WhatsApp, Slack, Telegram, Discord, Signal, iMessage, Microsoft Teams, email, and more. Once running, it manages tasks, monitors channels, executes multi-step workflows, and responds across all your platforms without waiting for prompts.

Think of it as a tireless executive assistant that lives inside the apps you already use. It doesn’t require a new interface; it meets you where you work.

The secret weapon is ClawHub, a marketplace of 5,700+ community-built skills. These range from browser automation and calendar management to voice integration (ElevenLabs) and a full workflow engine called “Lobster.” It’s the largest agent plugin ecosystem in existence.

How It Works (The Technical Layer)

Under the hood, OpenClaw runs as a Node.js daemon (TypeScript, Node ≥22) with a local-first gateway. It’s model-agnostic; it works with Claude, GPT, DeepSeek, and local models via Ollama. Memory is markdown-based and locally persistent. The core loop is straightforward: input → context → model → tools → repeat → reply.

Installation is one line: `npm install -g openclaw@latest && openclaw onboard --install-daemon`

MIT license. Zero cost beyond your LLM API usage.

The Honest Risks: What Happened When Autonomy Went Wrong

We’d be doing you a disservice if we didn’t cover the security story. In early 2026, a critical vulnerability (CVE-2026-25253, CVSS 8.8) was disclosed: the WebSocket connection that powered OpenClaw’s gateway could be hijacked, enabling remote code execution on any exposed instance.

Security researchers found 135,000+ exposed instances on the public internet. A Meta researcher’s entire email inbox was mass-deleted via prompt injection. The Dutch Data Protection Authority warned against deploying OpenClaw on systems with sensitive data. The team shipped 40+ security fixes in an emergency patch.

This isn’t a reason to avoid OpenClaw; the patches addressed the critical issues, but it’s a perfect illustration of why autonomous agents need what we call the Judgment Layer: human oversight at critical decision points. Full autonomy without checkpoints isn’t innovation. It’s a liability.
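A checkpoint like this works best when it is enforced in ordinary code that sits between the model and its tools, where injected instructions cannot talk their way past it. A minimal sketch, assuming a hypothetical `ToolCall` shape and policy values (`DESTRUCTIVE_TOOLS`, `MAX_UNATTENDED_ITEMS`): it caps the blast radius of bulk destructive actions like the inbox deletion above. It would not have addressed the WebSocket vulnerability, which was a gateway bug fixed by patching.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToolCall:
    name: str
    target_ids: list[str] = field(default_factory=list)  # items the call would touch


# Hypothetical policy values; choose your own.
DESTRUCTIVE_TOOLS = {"delete_emails", "archive_threads"}
MAX_UNATTENDED_ITEMS = 5


def guarded_execute(call: ToolCall,
                    execute: Callable[[ToolCall], str],
                    ask_human: Callable[[ToolCall], bool]) -> str:
    """Bulk destructive calls pause for a human, whatever the model was told to do."""
    bulk = call.name in DESTRUCTIVE_TOOLS and len(call.target_ids) > MAX_UNATTENDED_ITEMS
    if bulk and not ask_human(call):
        return f"BLOCKED: {call.name} on {len(call.target_ids)} items"
    return execute(call)
```

Small destructive actions still run unattended here; whether that is acceptable is exactly the kind of decision the Judgment Layer exists to make.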

Deep Dive: Agent Zero, The Agent That Builds Its Own Tools

The Philosophy: Prompts, Not Code

Agent Zero takes a fundamentally different approach from every other agent framework. Its creator, Jan Tomášek, built it around one radical idea: “Nearly everything is defined by prompts, not code.”

Where OpenClaw ships 5,700 pre-built skills for immediate productivity, Agent Zero ships almost nothing pre-built. Instead, it gives the AI agent access to a full Linux environment inside Docker and says, “Build whatever you need.”

Need to analyze a dataset? Agent Zero writes a Python script, installs the dependencies, and runs it. Need to connect to an API that doesn’t have an existing integration? Agent Zero reads the API documentation and builds one. This dynamic tool genesis is Agent Zero’s core differentiator; the agent isn’t limited by what tools have been pre-built. It creates tools for whatever situation it encounters.
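The core mechanic of dynamic tool genesis (write a script, run it, read the result) can be sketched with Python’s standard library. This is an illustration, not Agent Zero’s code: `GENERATED` is a hypothetical script standing in for model output, and a plain `subprocess` call is not a sandbox. Agent Zero addresses that risk by running inside Docker; this sketch only adds a separate process, a throwaway working directory, and a timeout.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def run_generated_tool(source: str, timeout_s: float = 10.0) -> str:
    """Write model-generated code to a temp file and run it in a separate Python process."""
    with tempfile.TemporaryDirectory() as workdir:
        script = Path(workdir) / "tool.py"
        script.write_text(source, encoding="utf-8")
        result = subprocess.run(
            [sys.executable, str(script)],
            capture_output=True, text=True, timeout=timeout_s, cwd=workdir,
        )
    if result.returncode != 0:
        # Return the traceback so the agent can read it and repair its own tool.
        return f"ERROR:\n{result.stderr.strip()}"
    return result.stdout.strip()


# Hypothetical "model output": a throwaway analysis script the agent wrote for itself.
GENERATED = '''
import csv
import io

data = io.StringIO("channel,leads\\norganic,120\\npaid,80\\nsocial,40\\n")
rows = list(csv.DictReader(data))
total = sum(int(r["leads"]) for r in rows)
for r in rows:
    print(f"{r['channel']}: {int(r['leads']) / total:.0%}")
'''
```

Running `run_generated_tool(GENERATED)` prints each channel’s share of leads (50%, 33%, 17%). Returning the error text rather than raising is deliberate: in an agent loop, the traceback becomes the next observation.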

Architecture: How Delegation Works

Agent Zero uses hierarchical delegation. When you give it a complex task, the primary agent (Agent 0) breaks it into subtasks and spawns specialist sub-agents (Agent 1, Agent 2, etc.) to handle each one. Each sub-agent gets its own dedicated prompts and tools. Agent 0 reviews their work, provides feedback, and iterates until the results meet the objective.

If that sounds like how a good marketing manager runs their team, that’s exactly the point. The architecture mirrors real-world team structures where a senior strategist delegates to specialists while maintaining quality oversight.

Memory That Actually Learns

Agent Zero’s memory system is built on FAISS vector search with three categories:

- Main memories: Persistent knowledge that lasts indefinitely: facts, instructions, brand guidelines.

- Conversation fragments: Contextual history from past interactions, surfaced by AI-enhanced filtering when relevant.

- Proven solutions: Successful approaches stored and recalled for similar future tasks. This is the closest thing to institutional learning in an AI system.

Unlike ChatGPT conversations that reset every session, Agent Zero genuinely improves over time. Run it long enough on your marketing workflows, and it builds a knowledge base of what’s worked, what hasn’t, and how to adapt.

Technical Specs for Your Dev Team

Python-based, Docker-first for security isolation, supports 10+ LLM providers via LiteLLM (including fully local via Ollama). MCP support as both client and server. A2A protocol support. MIT license. Currently at v0.9.8.1, approaching v1.0.

Installation: `docker pull agent0ai/agent-zero && docker run -p 50001:80 agent0ai/agent-zero`

Requirements: 4GB RAM (8GB+ for local LLMs), Docker Desktop, and API keys for your preferred provider, or none at all if running fully local.

OpenClaw vs Agent Zero: Where They Overlap, Where They Diverge

Let’s cut to the comparison that matters:

| Dimension | OpenClaw | Agent Zero |
|---|---|---|
| Philosophy | Immediate utility via pre-built integrations | Infinite flexibility via dynamic tool creation |
| GitHub Stars | ~196,000 (3 months) | ~14,900 (2 years) |
| Language | TypeScript / Node.js | Python |
| Tools | 5,700+ pre-built skills (ClawHub) | Writes its own tools at runtime |
| Memory | Markdown, local persistent | FAISS vector search + AI-enhanced filtering |
| Multi-Agent | Per-workspace routing | Hierarchical delegation (Agent 0 → sub-agents) |
| Security Model | Gateway + skill auditing (had a major CVE) | Docker-first host isolation |
| Best For | Power users wanting instant productivity across messaging platforms | Developers wanting maximum control and customization |
| License | MIT (free) | MIT (free) |

The core tension: OpenClaw is a polished product. Agent Zero is an infinitely flexible primitive. OpenClaw gives you a Swiss Army knife with every blade ready. Agent Zero gives you a forge and says “make the blade you need.”

What they share: Both are MIT-licensed, model-agnostic (they work with Claude, GPT, and open-source models), MCP-compatible, local-first, and community-driven. Both prove that the open-source community is winning the agent layer even as proprietary companies dominate the LLM layer.

What This Means for Growth Marketers

The implications are concrete, not theoretical. Here’s how autonomous agents are already changing each marketing function:

Content Production

The CoSchedule 2025 State of AI report found 85% of marketers actively use AI in content creation. But the next wave isn’t AI-assisted writing; it’s multi-agent content pipelines where one agent handles research, another strategizes, a third writes, and a fourth optimizes. These systems reduce manual work by 60–80% while maintaining quality consistency.

At Azarian Growth Agency, we’ve built what we call the Content Engine, an AI-native content production system that reduces article production time from 70 minutes to 7 minutes. It integrates the Claude API, MCP servers, and WordPress automation. Looking at it through the lens of this research, we realize it’s functionally a semi-autonomous agent system: goal decomposition (content brief → 14-phase workflow), tool use (MCP connections to WordPress, Surfer SEO, Ahrefs), self-correction (quality control stages), and human-in-the-loop oversight (the Judgment Layer).
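A four-agent pipeline with a human checkpoint at the end can be sketched as a chain of functions passing a shared brief. This is not the Content Engine or any product’s code: each stage is a hypothetical stand-in for an LLM-backed agent, and `approve` represents the human review step.

```python
from typing import Callable

Brief = dict
Stage = Callable[[Brief], Brief]


# Hypothetical stand-ins for four specialised agents; each returns an extended copy of the brief.
def research(brief: Brief) -> Brief:
    return {**brief, "sources": [f"competitor post about {brief['topic']}"]}


def strategize(brief: Brief) -> Brief:
    return {**brief, "angle": f"what competitors miss about {brief['topic']}"}


def write(brief: Brief) -> Brief:
    draft = f"{brief['angle'].capitalize()}. Based on {len(brief['sources'])} source(s)."
    return {**brief, "draft": draft}


def optimize(brief: Brief) -> Brief:
    draft = brief["draft"]
    if brief["keyword"].lower() not in draft.lower():
        draft = f"{brief['keyword']}: {draft}"  # crude stand-in for on-page SEO
    return {**brief, "draft": draft}


def run_pipeline(brief: Brief, stages: list[Stage], approve: Callable[[Brief], bool]) -> Brief:
    for stage in stages:
        brief = stage(brief)
    # Judgment Layer: nothing is marked publishable without a human sign-off.
    brief["status"] = "ready to publish" if approve(brief) else "returned for revision"
    return brief
```

Keeping each stage a pure function of the brief makes it easy to swap one agent, rerun a single stage, or log exactly what each agent added.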

Campaign Management

Google’s Ads Advisor and Amazon’s Ads Agent signal that the ad platforms themselves are becoming agentic. L’Oréal’s results with AI Max (2x conversion rate, 31% lower CPA) show what’s possible when you let agents optimize across long-tail queries humans would never find.

Autonomous bid optimization tools now make hourly adjustments across thousands of keywords, replacing an estimated 20–30 hours of weekly human work for mid-sized accounts. The marketer’s role shifts from executing campaigns to architecting the systems that run them.
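How such a tool decides is platform-specific and not described here. As a minimal sketch of one simple hourly rule (hypothetical, not how Google or Amazon bid), the adjustment below moves each keyword’s bid toward a target CPA, limits the change per run, and skips keywords with too little data. `KeywordStats`, `max_step`, and `min_conversions` are assumptions.

```python
from dataclasses import dataclass


@dataclass
class KeywordStats:
    keyword: str
    bid: float        # current max CPC, in account currency
    cost: float       # spend in the lookback window
    conversions: int


def adjust_bid(stats: KeywordStats, target_cpa: float, max_step: float = 0.2,
               min_conversions: int = 3) -> float:
    """One hourly adjustment: move the bid toward the target CPA, at most +/- max_step per run."""
    if stats.conversions < min_conversions:
        return stats.bid  # too little data: leave it for a human or a later run
    actual_cpa = stats.cost / stats.conversions
    ratio = target_cpa / actual_cpa  # > 1 means cheaper than target, so bid up
    ratio = max(1 - max_step, min(1 + max_step, ratio))
    return round(stats.bid * ratio, 2)
```

With a $20 target, a keyword converting at $30 has its $2.00 bid cut by the 20% cap to $1.60 rather than by the full third. The cap is the design choice that keeps one noisy hour from swinging spend.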

SEO and GEO

This is where it gets personal for us. As autonomous agents become the primary research interface for users (OpenClaw answering questions via WhatsApp, ChatGPT Agent browsing the web, Perplexity executing multi-step research), the content these agents surface becomes the content that drives decisions.

Being the cited source in an agent’s response is the new “Rank #1.” This is exactly what Generative Engine Optimization (GEO) addresses: optimizing content for AI discovery, not just search engine discovery. Our GEO strategy focuses on entity optimization, LLM-friendly content structure, authority signals, and structured data. These are precisely the elements that autonomous agents need to extract and cite reliable information.
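Of the GEO elements listed, structured data is the most mechanical to implement. A minimal sketch that emits schema.org `Article` markup as a JSON-LD script tag; the property names come from the schema.org vocabulary, while the headline, author, and date passed in are placeholder values.

```python
import json


def article_jsonld(headline: str, author: str, published: str, about: list[str]) -> str:
    """Build schema.org Article markup as a JSON-LD <script> tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Organization", "name": author},
        "datePublished": published,  # ISO 8601 date, e.g. "2026-02-20"
        "about": [{"@type": "Thing", "name": topic} for topic in about],
    }
    # Escape "</" so text containing "</script>" cannot close the tag early.
    payload = json.dumps(data, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'
```

Naming the entities an article covers in `about` gives crawlers and agents an explicit, machine-readable statement of what the page is about, rather than leaving them to infer it from prose.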

CRO and Analytics

Multi-armed bandit algorithms are replacing static A/B tests, dynamically shifting traffic to best-performing variants and reducing optimization time by 60%. Agent Zero’s dynamic tool creation is particularly relevant here. Imagine an agent that writes custom analytics scripts, pulls data from multiple sources, identifies performance anomalies, and generates board-ready reports without anyone opening a dashboard.
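No specific bandit algorithm is named above; Thompson sampling is one common choice and is swapped in here as an illustration. The sketch simulates visitors with assumed true conversion rates, so the numbers are synthetic, not results.

```python
import random


def thompson_sampling(true_rates: dict[str, float], visitors: int, seed: int = 0) -> dict[str, int]:
    """Route each visitor to a variant via Thompson sampling on Beta posteriors."""
    rng = random.Random(seed)
    wins = {v: 0 for v in true_rates}
    losses = {v: 0 for v in true_rates}
    shown = {v: 0 for v in true_rates}
    for _ in range(visitors):
        # Draw a plausible conversion rate for each variant from Beta(1 + wins, 1 + losses).
        sampled = {v: rng.betavariate(1 + wins[v], 1 + losses[v]) for v in true_rates}
        choice = max(sampled, key=sampled.get)
        shown[choice] += 1
        # Simulated visitor: in production this would be a real conversion event.
        if rng.random() < true_rates[choice]:
            wins[choice] += 1
        else:
            losses[choice] += 1
    return shown
```

Unlike a fixed 50/50 A/B split, traffic drifts toward the stronger variant as evidence accumulates, which is where the time savings come from. In a live test, the simulated `rng.random()` line is replaced by real conversion events and the rest of the loop stays the same.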

The Judgment Layer: Why Human Oversight Matters More, Not Less

Every security incident, every quality failure, every expensive agentic AI project that crashed tells the same story: the problem was never the technology. It was the absence of human judgment at critical decision points.

The evidence is overwhelming:

- OpenClaw’s security crisis proved that always-on agents without oversight create liability.

- Devin, Cognition’s AI coding agent (the company is valued at $10.2 billion): Cognition’s own team acknowledges it “can’t independently tackle an ambiguous project end-to-end.” Its 67% merge rate means roughly a third of its pull requests are not merged.

- Only 2% of organizations have deployed agents at scale. The #1 production challenge (32%) is quality, and quality is a judgment problem, not a technology problem.

- Agent Zero’s own architecture validates this: its hierarchical delegation model (Agent 0 maintains strategic oversight while sub-agents handle execution) is essentially a human-in-the-loop design, implemented in code.

The winning model isn’t full autonomy. It’s semi-autonomy with strategic human checkpoints, what we call the Judgment Layer. AI handles the execution (research, drafting, optimization, distribution). Humans handle the judgment (strategy, brand alignment, quality standards, creative direction).

This is how our Content Engine reduces production time by 90% without sacrificing the strategic thinking that makes content effective.

How to Get Started: A Practical Framework for Marketing Teams

You don’t need to deploy OpenClaw or Agent Zero tomorrow. But you should be preparing your team and workflows for the agentic era. Here’s how:

Step 1: Audit Your Workflows for Agent Readiness

Map every marketing workflow your team runs. For each one, ask: “Could an AI agent handle the execution if a human defined the strategy and reviewed the output?” The best candidates are high-frequency, rule-based tasks with clear quality criteria:

- Competitive research and monitoring

- Content brief creation and first-draft writing

- SEO optimization and technical audits

- Campaign performance reporting

- Email sequence generation and send-time optimization

- Social media scheduling and distribution
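One way to turn the audit question into a first-pass shortlist is to score each workflow on the three traits named above. The `Workflow` fields, the equal weights, and the four-runs-per-month threshold are hypothetical; treat the ranking as a conversation starter for the team, not a verdict.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    runs_per_month: int
    rule_based: bool         # can the steps be written down as rules?
    clear_quality_bar: bool  # can a reviewer say pass/fail quickly?


def readiness(w: Workflow) -> int:
    """Hypothetical 0-3 score: one point per trait (high-frequency, rule-based, clear criteria)."""
    return int(w.runs_per_month >= 4) + int(w.rule_based) + int(w.clear_quality_bar)


def rank(workflows: list[Workflow]) -> list[tuple[str, int]]:
    """Most agent-ready workflows first."""
    return sorted(((w.name, readiness(w)) for w in workflows), key=lambda p: p[1], reverse=True)
```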

Step 2: Define Your Judgment Layer

For each workflow you’ve identified, define exactly where human judgment must remain. Organizations succeeding with agents share a common pattern: they start with “boring work” first (document processing, data reconciliation, reporting) and invest in observability (89% of successful organizations have implemented agent monitoring).

Ask your team: “If an AI agent made this decision incorrectly, what’s the worst-case impact?” High-impact decisions (brand messaging, budget allocation, client-facing deliverables) need human approval. Low-impact decisions (keyword selection, scheduling, data formatting) can run autonomously.
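The worst-case-impact question can be written down as an explicit routing rule. A minimal sketch, assuming decisions arrive tagged with a type: the decision-type names are hypothetical labels for the examples in the paragraph above. The key design choice is the last line, where anything unclassified defaults to a human instead of running on its own.

```python
from enum import Enum


class Route(Enum):
    AUTONOMOUS = "run autonomously"
    HUMAN_APPROVAL = "queue for human approval"


# Hypothetical labels for the examples above; tune them to your own worst-case answers.
HIGH_IMPACT = {"brand_messaging", "budget_allocation", "client_deliverable"}
LOW_IMPACT = {"keyword_selection", "scheduling", "data_formatting"}


def route(decision_type: str) -> Route:
    if decision_type in HIGH_IMPACT:
        return Route.HUMAN_APPROVAL
    if decision_type in LOW_IMPACT:
        return Route.AUTONOMOUS
    return Route.HUMAN_APPROVAL  # unclassified: fail safe, not fail open
```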

Step 3: Pick Your Starting Point

If your team is primarily marketers and operators (not developers), start with tools that already have agent capabilities built in: Salesforce Agentforce for CRM workflows, Google’s AI-powered campaign features for paid media, or Claude Code for content production.

If you have developers on your team, the open-source frameworks offer more control. CrewAI is the fastest path from concept to working prototype (role-based multi-agent teams, intuitive design). LangGraph gives you maximum control for complex workflows (used by Uber, LinkedIn, JP Morgan in production).

If you want to experiment with OpenClaw or Agent Zero specifically:

- OpenClaw if you want instant productivity across your existing messaging platforms and are comfortable with the security considerations of an always-on agent.

- Agent Zero if you have Python expertise, want maximum control over agent behavior, and need Docker-isolated security for handling client data.

Step 4: Measure What Matters

Don’t measure how “AI-powered” your process looks. Measure outcomes:

- Time per deliverable (blog post, campaign report, email sequence)

- Quality scores (content performance, conversion rates, client satisfaction)

- Revenue impact (SQLs generated, organic traffic growth, ROAS improvement)

- Team capacity freed up for strategic work

What’s Next: Three Trends Growth Marketers Should Watch

- The ChatGPT Ads launch is coming. OpenAI is expected to launch advertising within ChatGPT in 2026. When AI agents become ad-supported interfaces, every marketer will need to understand how to appear in agent-mediated discovery. GEO isn’t optional anymore; it’s table stakes.

- Agent-to-agent protocols will create new marketing channels. As MCP and A2A mature, agents will discover and interact with services on behalf of users. Being discoverable by agents, not just by search engines, becomes a new optimization surface. The first agencies to publish frameworks for protocol-level discoverability will own the conversation.

- The team structure shifts from executors to architects. When agents handle execution, marketing teams need fewer people doing tasks and more people designing systems. The most valuable marketing hire in 2027 won’t be a content writer or a paid media specialist; it’ll be someone who can architect agentic workflows that produce results at scale.

The Bottom Line

The autonomous agent wave isn’t coming; it’s here. OpenClaw proved there’s massive consumer demand for agents that work inside existing tools. Agent Zero showed that agents can be infinitely flexible when you give them the right architecture. And the enterprise data confirms that teams deploying agents with a clear strategy are seeing 171% average ROI.

But the data also shows that 40%+ of agentic AI projects will fail. The difference between the winners and the expensive failures isn’t the technology; it’s whether you’ve built the Judgment Layer: clear strategy, defined human oversight points, measurable outcomes, and the discipline to start with boring work before chasing flashy demos.

At Azarian Growth Agency, we’ve been building AI-native marketing systems since 2020, before “agentic AI” was even a term. Our Content Engine, our Judgment Layer methodology, and our GEO optimization strategy were designed for exactly this moment: when AI agents move from demos to daily operations.

The question isn’t whether your team will use autonomous agents. It’s whether you’ll be the one designing the systems or the one scrambling to catch up.

Want to see our agentic content production system in action?

Watch our webinar where we demo live agent workflows for content, advertising, and campaign management. Or talk to our growth team about building an AI-native marketing engine for your business.